Designing AI Assistance Without Breaking Trust

How I helped cybersecurity admins write complex identity expressions more easily, quickly, and safely, with and without generative AI.

Context

This case study focuses on introducing AI-assisted code generation into IBM Verify, an enterprise identity and access management platform.

Verify relies on Common Expression Language (CEL) to define identity logic such as attribute transformations, access policies, and payload handling. While CEL is powerful, it is also difficult to write and maintain — especially for administrators who are not CEL experts.

The product direction was to explore how natural language prompting could help users create CEL scripts more efficiently, while still operating within the constraints of an enterprise security system.

Why does this matter?

"AI introduces a tension between speed and trust in security-critical workflows"

Introducing AI into identity and security workflows creates an inherent tension:

- Speed: AI can significantly reduce the time it takes to write complex logic

- Trust: Identity systems demand correctness, transparency, and user accountability

In this context, a small mistake can break authentication flows or introduce security risk.

Admins are ultimately responsible for the logic they deploy — which makes blind automation unacceptable.

Meet Scott

Scott works in Cybersecurity for a large retail company. He is the Access Management administrator and is responsible for the day-to-day tasks that keep the company’s infrastructure and apps secure, compliant, running and useful to others. Most of his day is spent using IBM Verify.

But Scott has a difficult task: he often needs to write CELx to create custom identity attributes, which is like trying to communicate in a country where nobody speaks your language and the only dictionary you can find is incomplete. For Scott and everyone on his team, CELx scripting is really hard.

My Role

I was the product designer on this initiative, responsible for shaping the end-to-end user experience of AI-assisted CEL generation. In this role, I:

- Designed the end‑to‑end user experience, from early concepts to refined prototypes.

- Brainstormed and developed conceptual ideas to support and guide user research.

- Created low‑ and high‑fidelity prototypes for testing, reviews, and stakeholder alignment.

- Collaborated closely with developers, engineers, project managers, and the broader cross‑functional team to ensure feasibility and clarity.

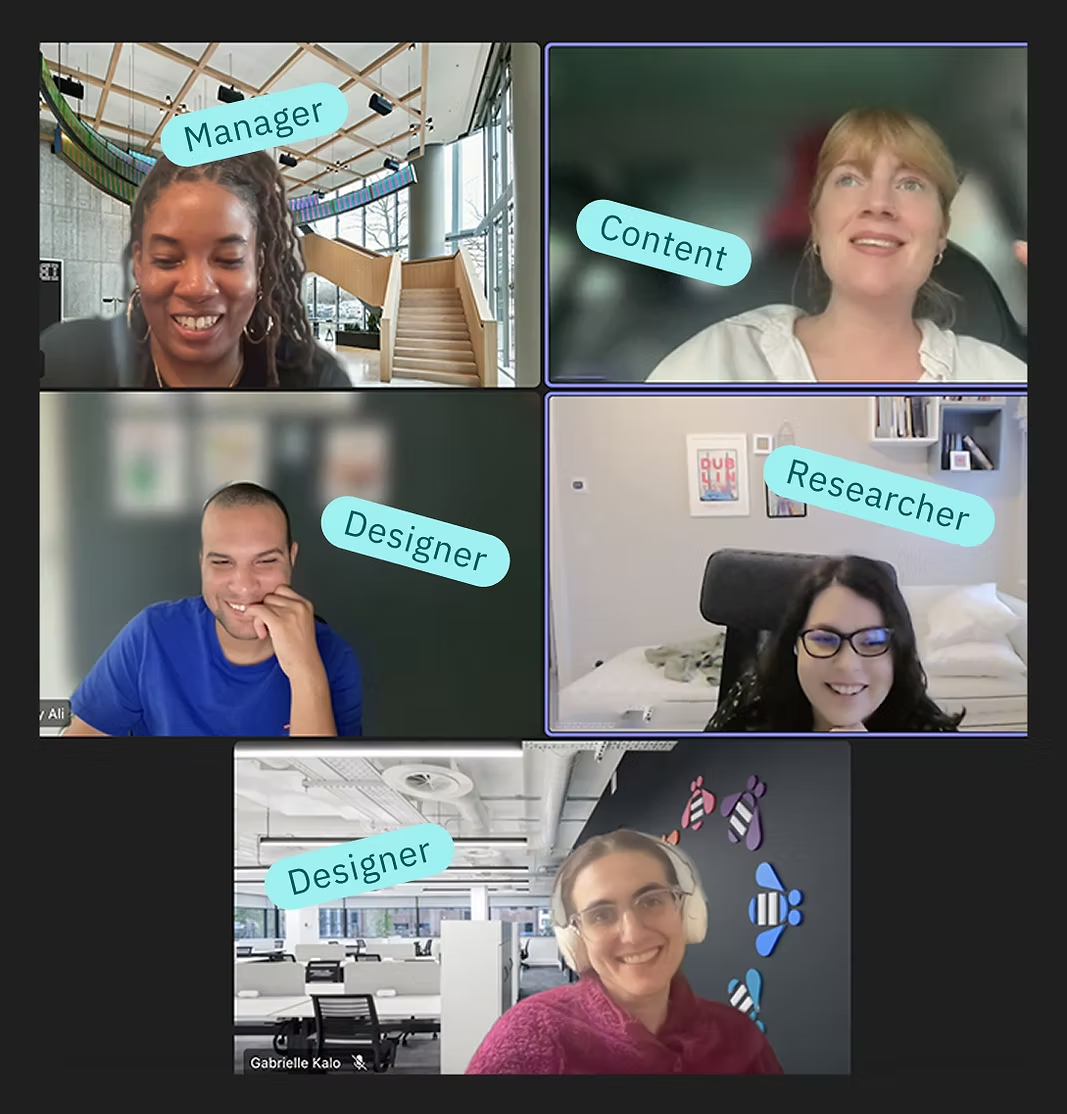

Gen-AI Team

This project began with a request from the sales team to add generative AI to Verify.

After all, everyone else was adding AI to their products, so of course we needed it too. How hard could it be?

At first, everyone assumed that using AI to create difficult code would obviously be useful. However, as the project continued, we realised that both the opportunities and the challenges were larger than we expected.

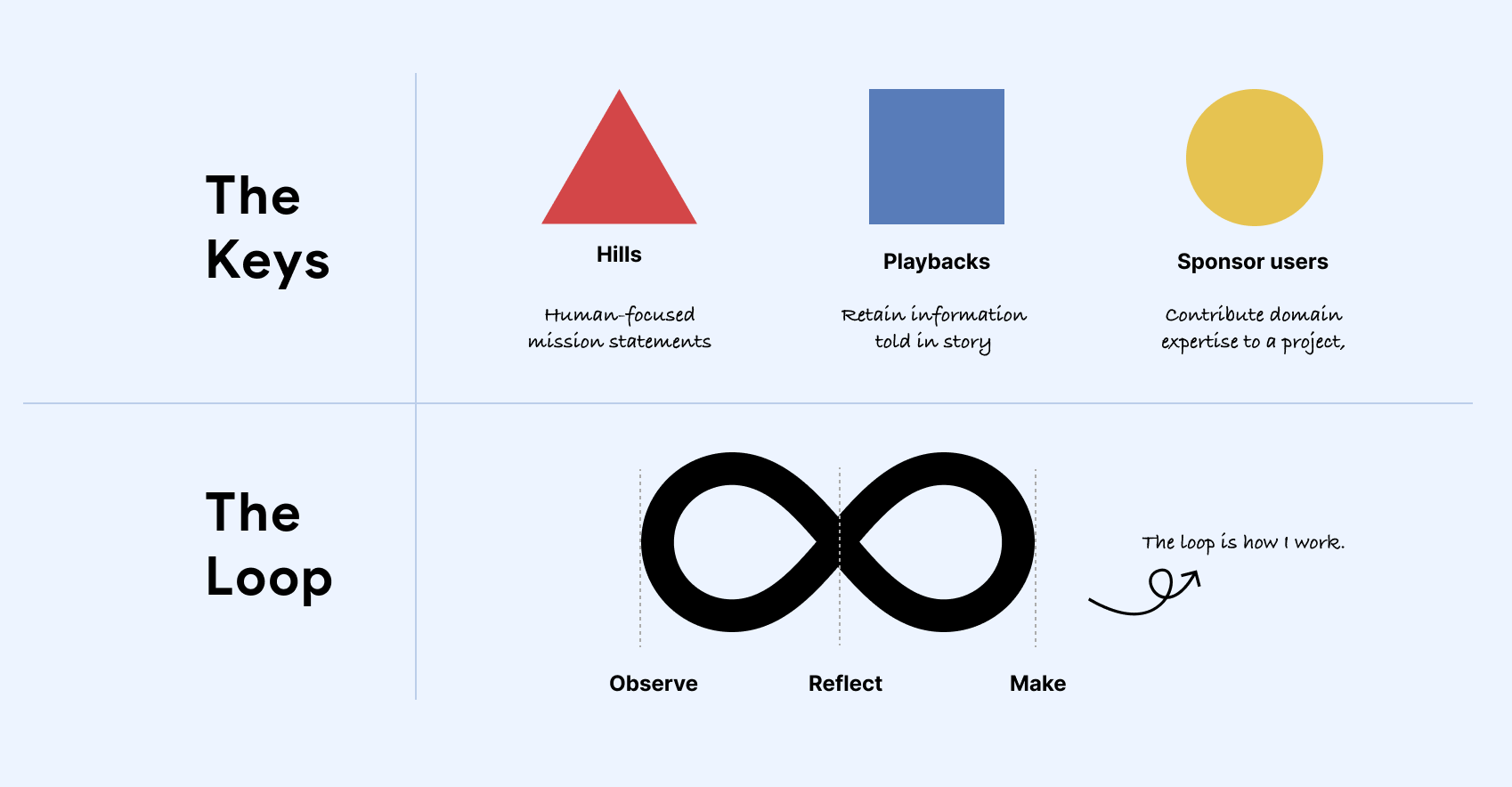

How I framed and worked through the problem

"I focused first on aligning the team on the right problem before designing solutions."

Before moving into solutions, I focused on aligning on the right problem and reducing risk early. I work iteratively — observing real constraints, reflecting on tradeoffs, and making focused decisions — while using clear alignment tools to keep the team moving in the same direction. This approach helped us navigate ambiguity early and move into execution with confidence.

Hills

We used clear, human-focused problem statements to align on what actually needed to change before committing to solutions.

Playbacks

Regular check-ins helped us reflect on progress, validate assumptions, and ensure we were solving the right problem — not just shipping features.

Sponsor users

Close collaboration with domain experts grounded design decisions in real identity and security constraints.

Understanding the current reality (As-Is)

Before exploring solutions, I focused on understanding how administrators currently create custom attributes and CEL logic in Verify — and where friction, delay, and risk actually occur.

This journey captures a common scenario for identity administrators today. While the goal is straightforward — creating a custom attribute — the path to get there is fragmented and cognitively demanding.

Admins often:

- Know what they want to achieve, but not how to express it in CEL

- Rely on searching documentation, internal chat, or colleagues for help

- Iterate through trial and error before arriving at a working solution

Even simple changes can take significant time, introducing frustration and unnecessary dependency on others.

The cognitive load and the time taken to create a custom attribute has left me very frustrated. I feel pressure to learn CEL but there’s very little resources to do so and I don’t have the time! I’ve familiarity with Java and wish there was a way I could’ve simply used that. It would be really useful if something could’ve helped me create a custom attribute faster without having to run around looking for a code. — IBM Verify User

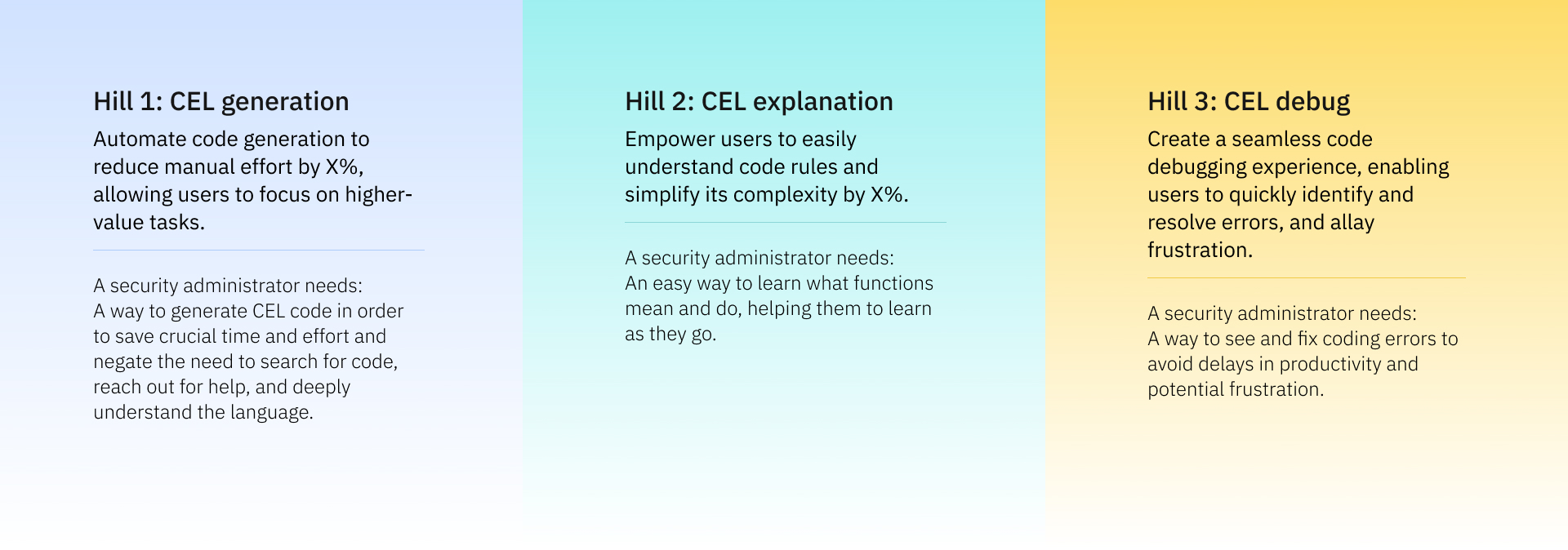

The Hill (Problem Alignment)

Based on these insights, we aligned on a clear Hill to guide the work:

Help identity administrators create correct CEL logic faster, without increasing cognitive load or introducing security risk.

The problem behind the scenes

The Scott persona shows that CELx is hard to learn because it is unique to IBM, with limited documentation and few clear examples. Users often depend on old snippets from past projects, colleagues or online forums, and even small mistakes can break authentication flows or create security risks. They usually know what they want to achieve but struggle to write it in CELx, so the process becomes slow and frustrating.

How Scott feels having to write CELx

Poor documentation

IBM's CELx documentation lacks code examples, making it difficult for users to understand and implement.

Steep learning curve

IBM's custom wrapper around CELx makes it a unique language, so users have no prior familiarity with it and struggle to learn it.

Too many workarounds

Users often end up searching for snippets from previous work to write their code, or asking colleagues and forums for help.

Exploring the solution space (Options & Inspiration)

- Investigating how AI-assisted coding tools support generation, explanation, and correction before defining a direction.

With a clear Hill in place, I explored how AI-assisted creation is handled in other code and logic-heavy tools — not to copy patterns, but to understand where assistance is most effective and where it breaks trust.

The goal at this stage was not to design a solution, but to understand the range of possible approaches, their tradeoffs, and where they might fail in a security-critical context. I looked across a mix of internal patterns, adjacent products, and developer-facing tools to understand how others support complex logic creation without removing user control.

What we learned

Across these tools, three recurring forms of assistance stood out:

- Generation: helping users get started or translate intent into code

- Explanation: making generated or existing logic understandable

- Fixing: supporting correction, refinement, and iteration

Notably, tools that leaned too heavily on generation without explanation or control often felt unpredictable or unsafe, especially in more complex or high-stakes workflows.

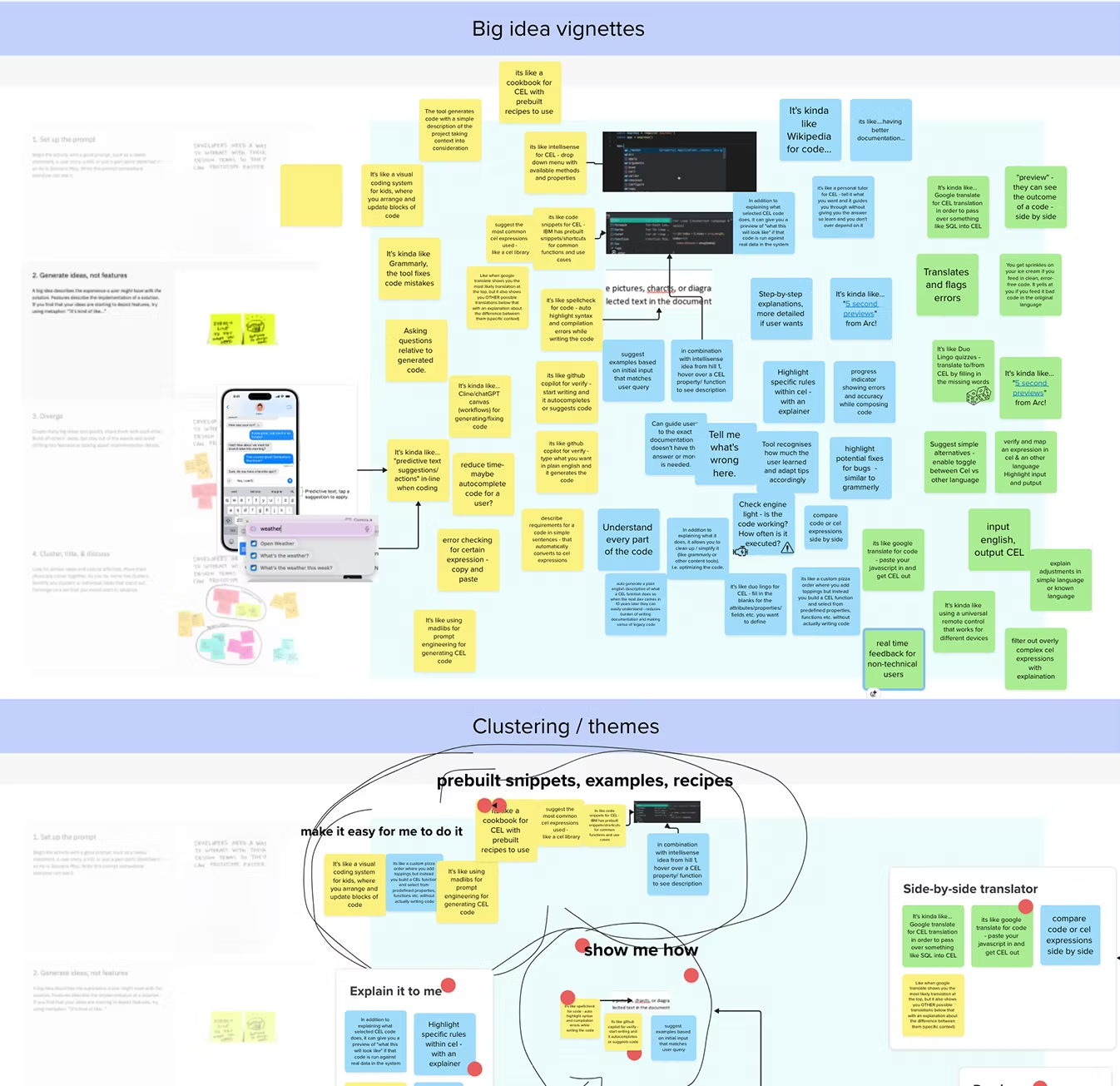

Early design chaos

With the as-is scenario defined, the competitive analysis completed, and a clear understanding of the problem, we conducted a workshop to brainstorm some ideas.

We then moved on to sketching low-fidelity ideas. To start designing the early concept, we focused on one of the possible entry points for CELx. There are several entry points for CELx in Verify, but they all lead into just two user interfaces.

Early design exploration: low-fidelity concepts

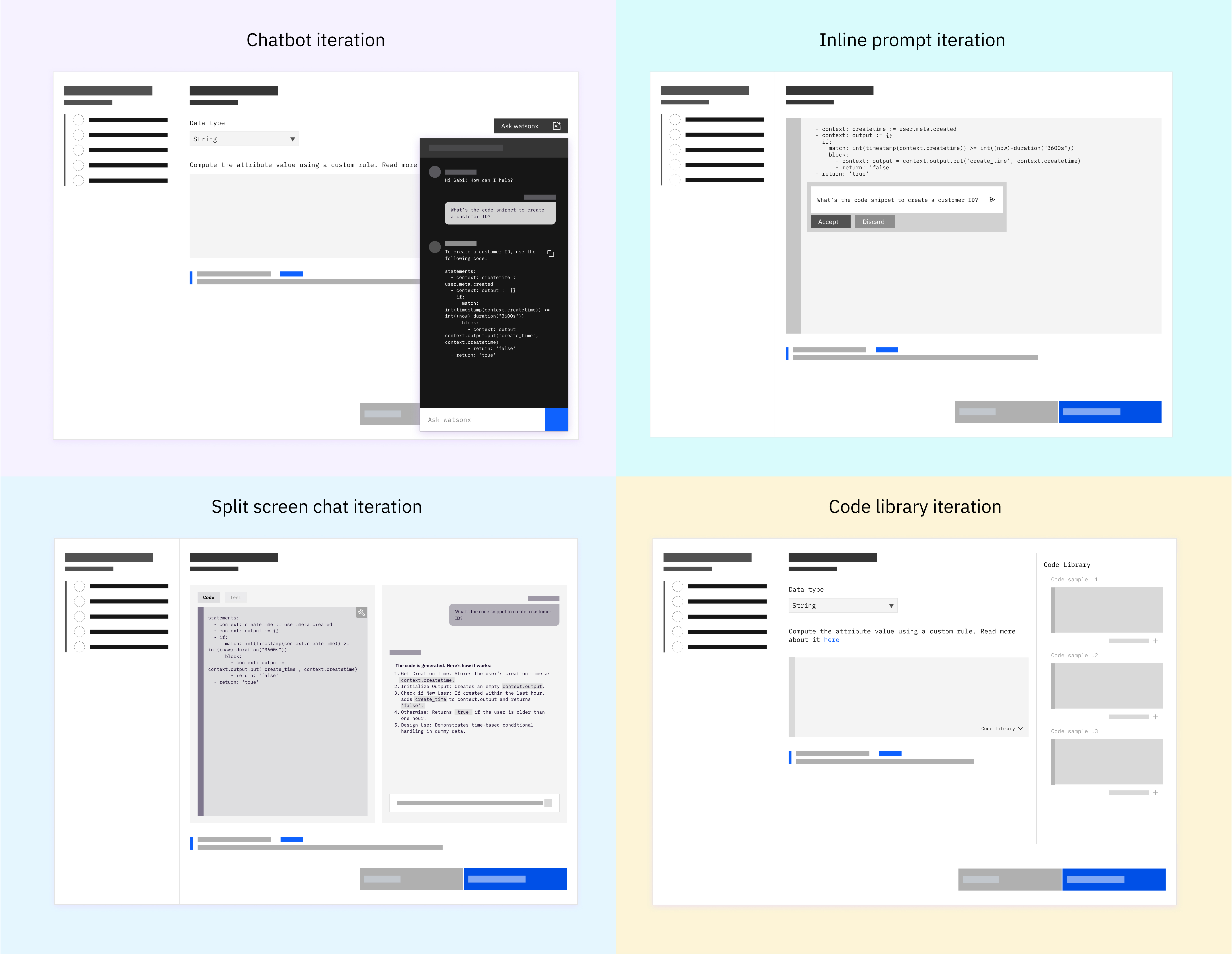

With a clearer understanding of how AI assistance could support creation — generate, explain, and fix — I moved into early design exploration to test how these ideas might translate into real workflows inside Verify.

I explored multiple low-fidelity concepts to test how AI assistance could fit into the CEL authoring workflow. Each concept intentionally varied how and when AI appeared, how much context it exposed, and how much control users retained. At this stage, the goal was not polish, but to explore interaction models, validate assumptions, and surface tradeoffs early.

The goal was not to design a single solution, but to understand which interaction patterns felt supportive versus risky in a security-critical environment.

User Feedback That Shaped the Direction

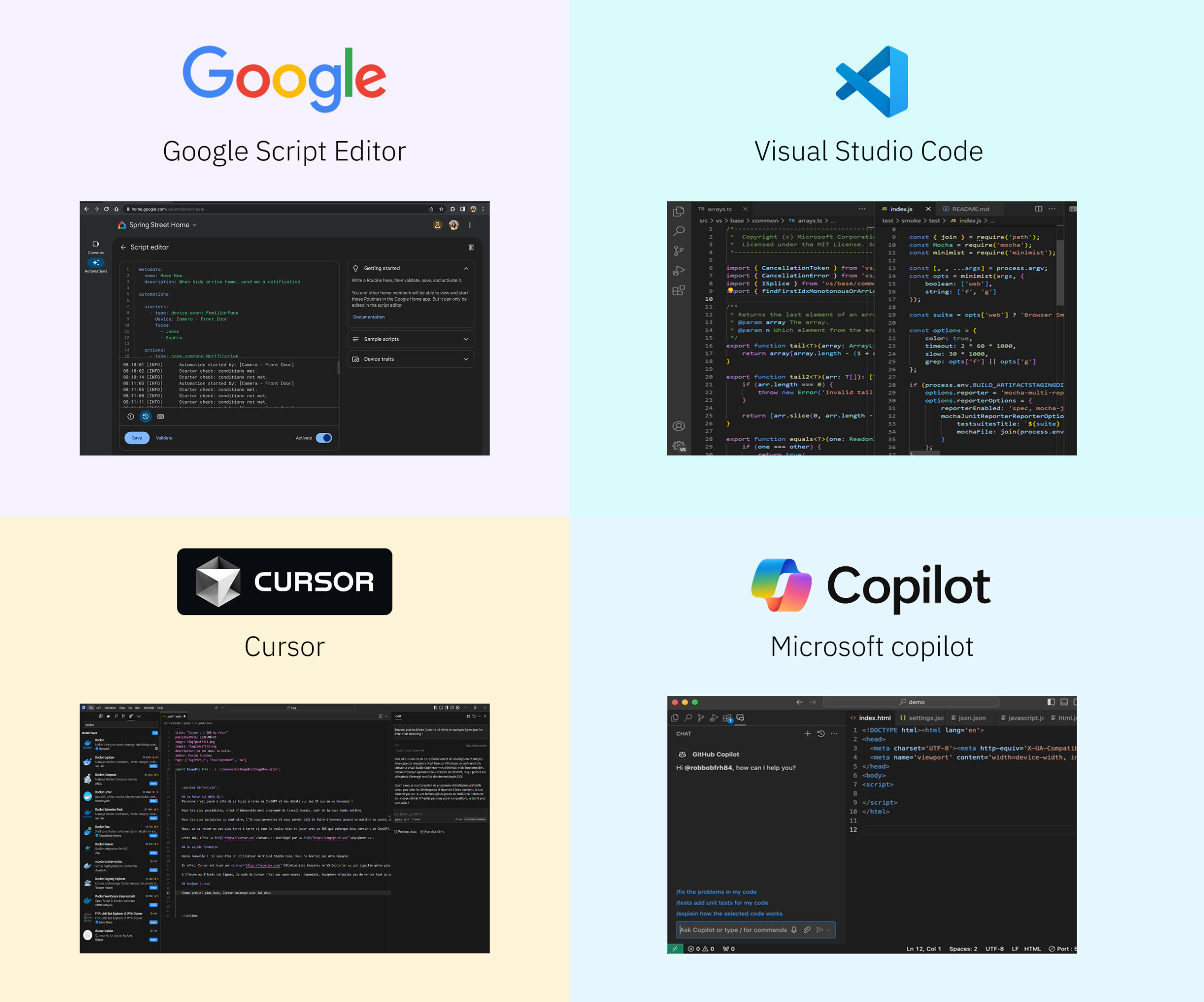

Before converging on a final direction, I validated early concepts with identity administrators to understand whether the proposed AI assistance felt useful, trustworthy, and appropriate for a security-critical workflow. The goal was not to test UI polish, but to validate the value and viability of the interaction model.

Research objectives:

- How do users (or user proxies) respond to early solution concepts?

- Assess the perceived value of multiple low-fidelity solution concepts with Verify users or user proxies (i.e. internal IBM SMEs)

- Understand how the solution concepts fit into actual user workflows (i.e. how well would they meet users' needs?)

- Identify design considerations that we may not have thought of already (i.e. what’s missing from these ideas?)

Key validation signals

Across sessions, a few clear signals emerged that guided convergence:

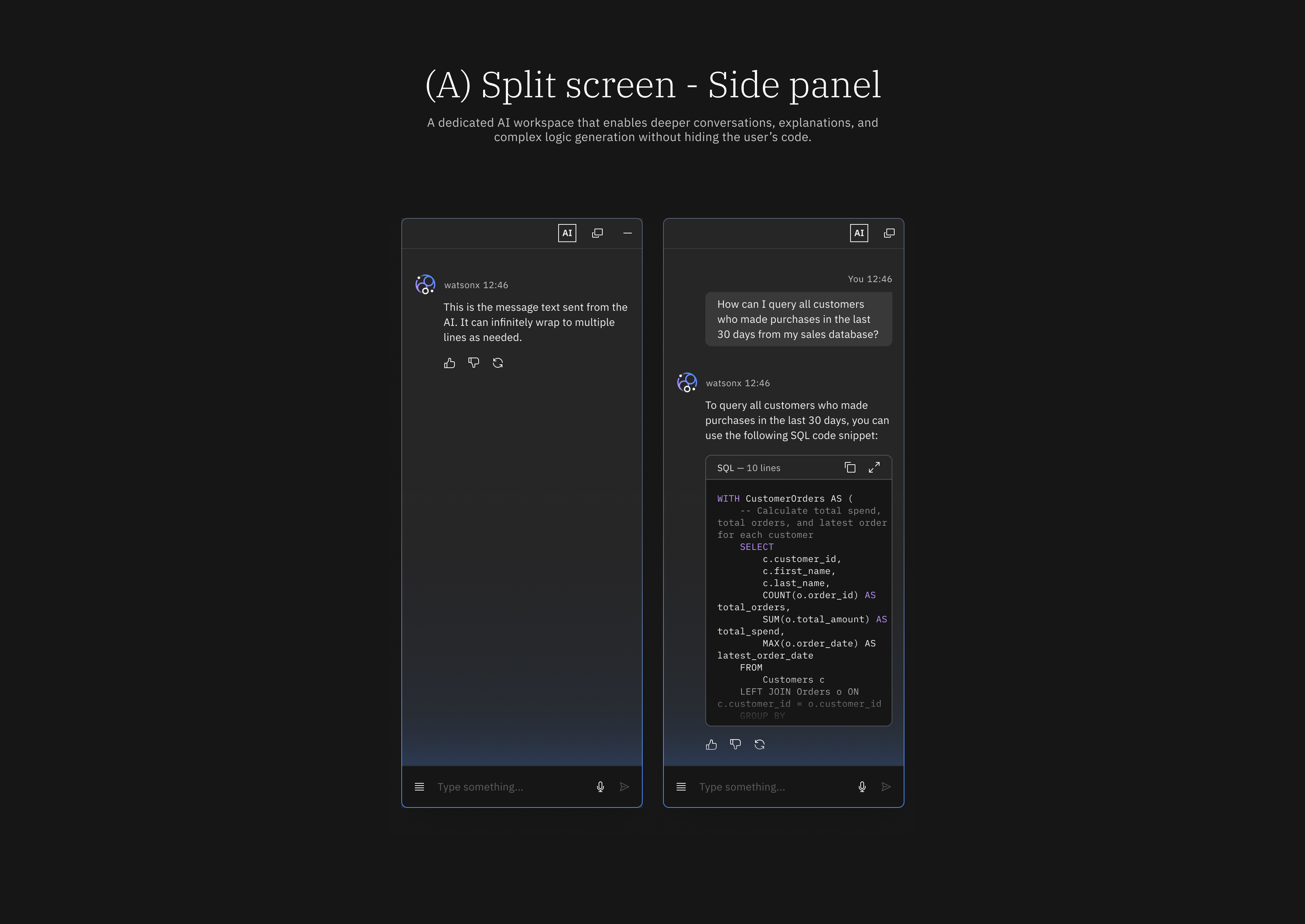

- Split-screen experiences increased confidence: Users preferred keeping generated CEL visible and editable alongside explanations.

- Inline and chat-only prompts felt lightweight but limited: These approaches were fast, but often lacked sufficient context for complex logic.

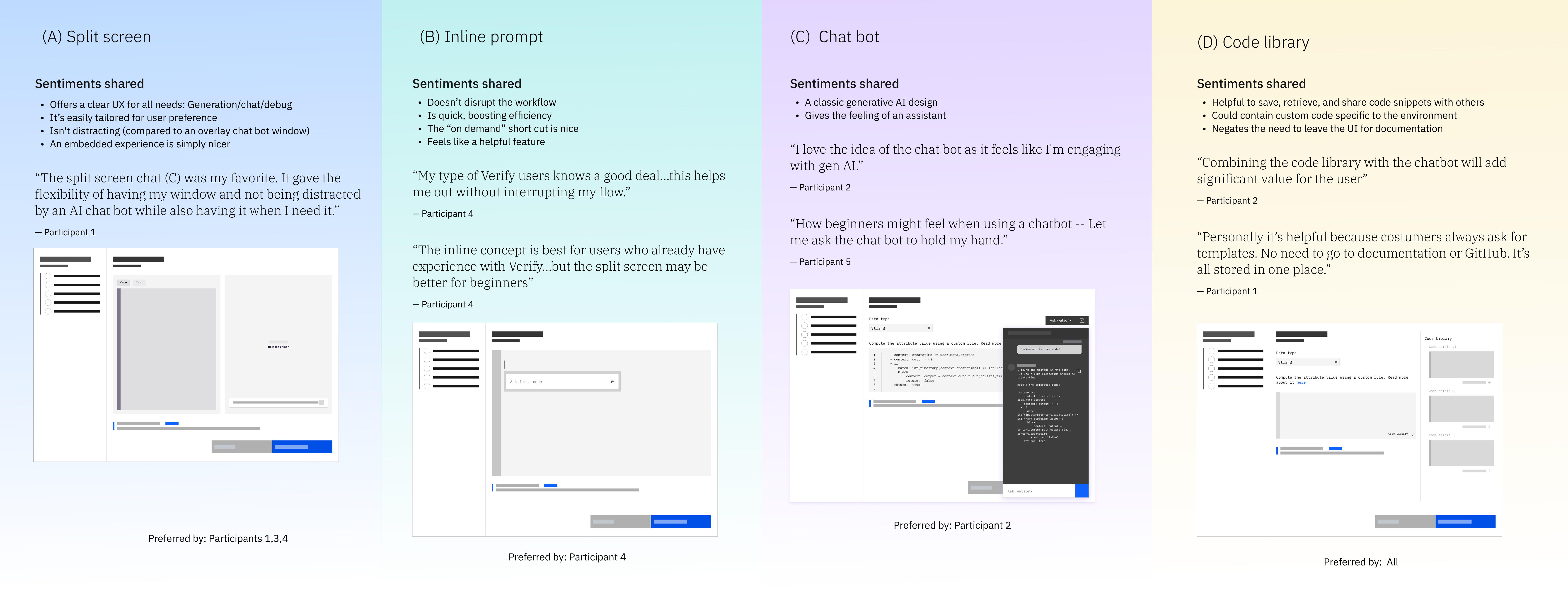

How we chose the final design

- How exploration and user feedback guided the final direction.

Based on exploration and validation, the goal was not to pick a single interaction pattern, but to combine the strengths of multiple approaches while avoiding their weaknesses.

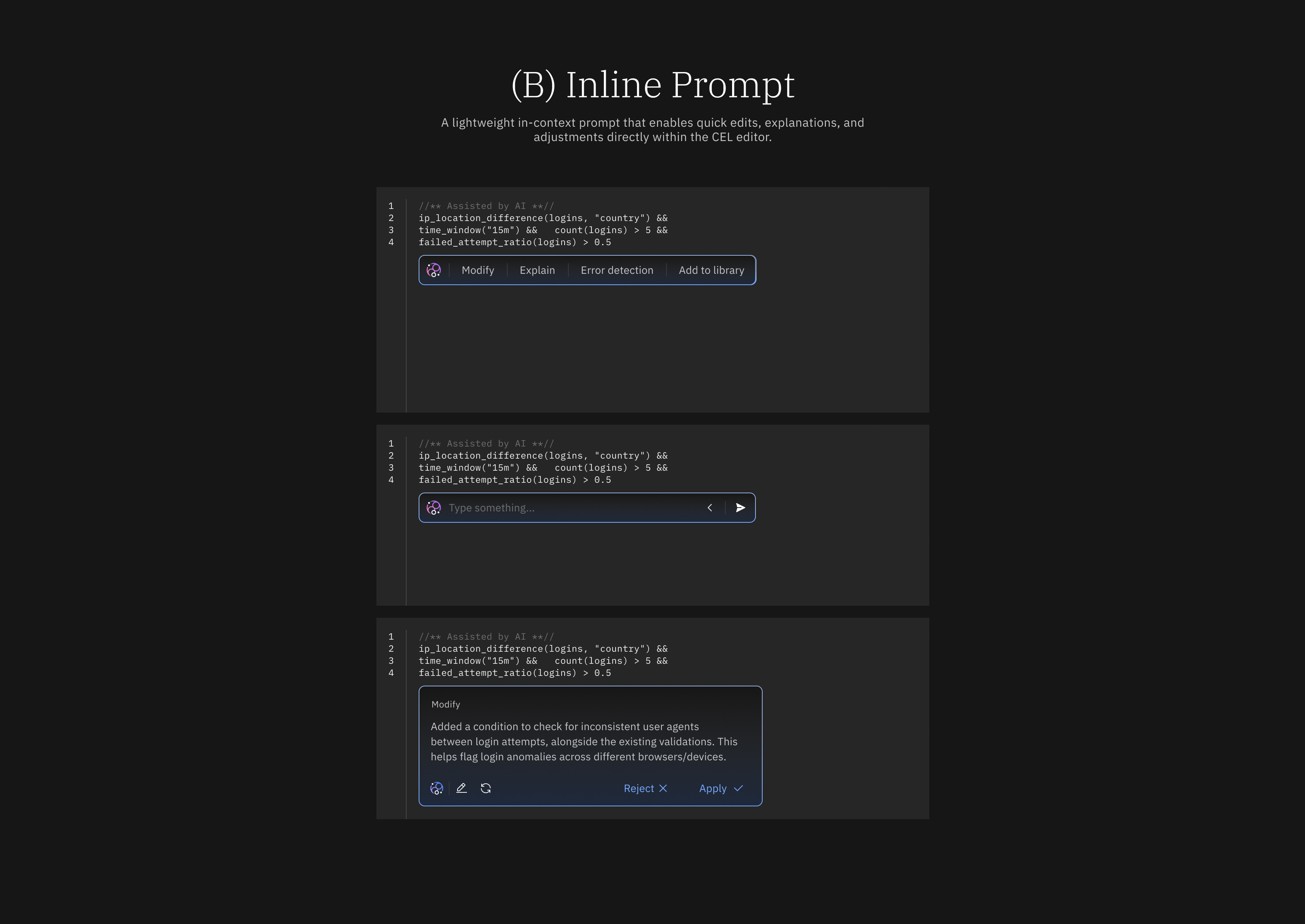

Rather than choosing between a split screen (side panel) and inline prompting, we intentionally designed a solution that blends both, supported by a reusable code library. Informed by this research, our solution combines two concepts: split screen (A) and inline prompt (B).

- With a dual panel, the split screen offers clarity and space, allowing AI to complement a workflow rather than obstruct it.

- The inline prompt offers quick action for when the full chat experience isn’t required.

- Further, all participants valued the library as a companion to generative AI. Its addition brings extra panache and ingenuity to the primary solution.

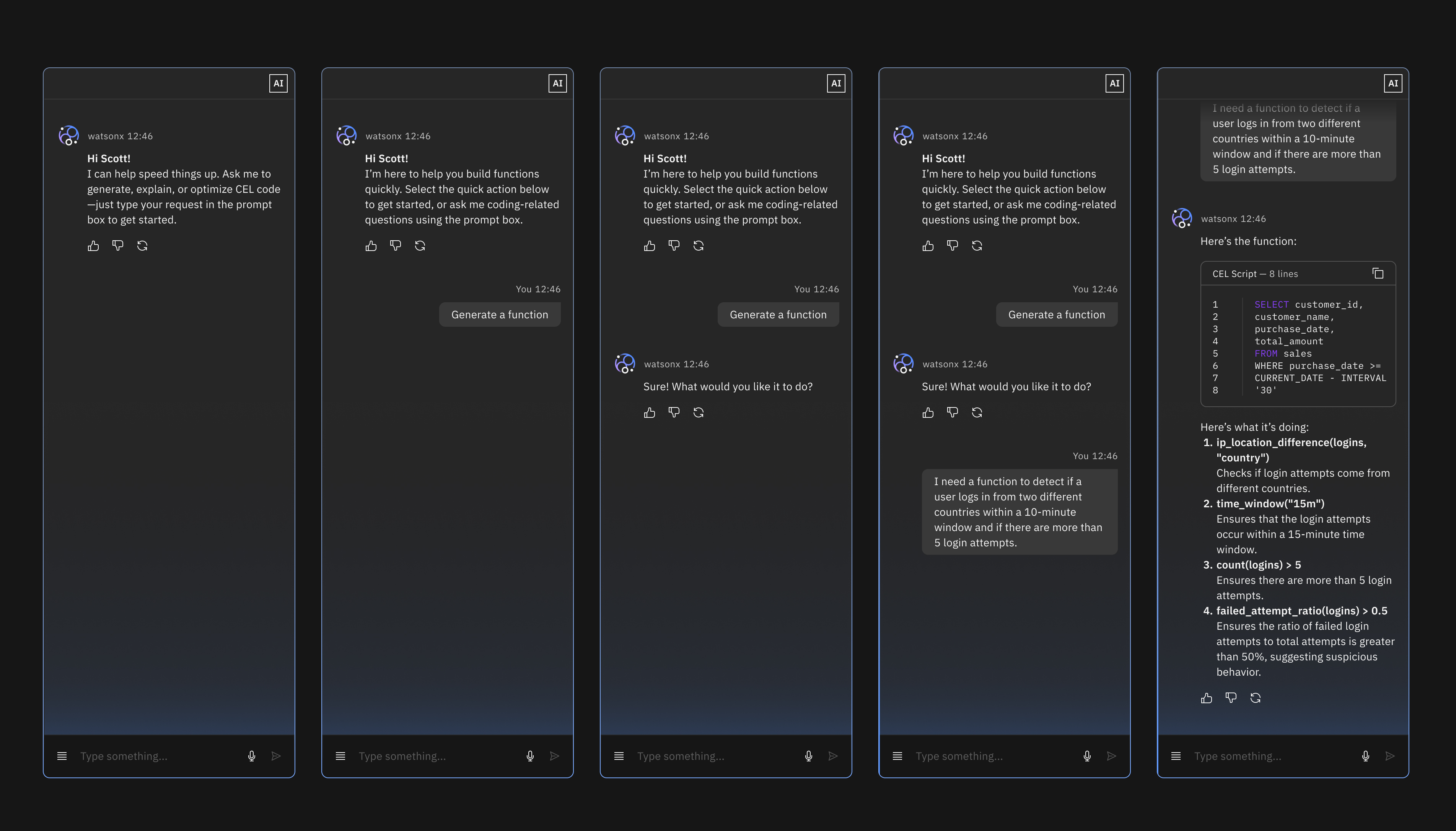

High-fidelity design (Generate, Explain, and Fix)

The final experience introduces AI assistance into Verify through three complementary surfaces: a chat panel, an inline prompt, and a reusable code library.

Each surface serves a different moment of need, but all are designed around the same goal: helping users create, understand, and refine CEL logic without losing control or trust. Rather than forcing a single interaction model, the experience adapts to user intent and task complexity.

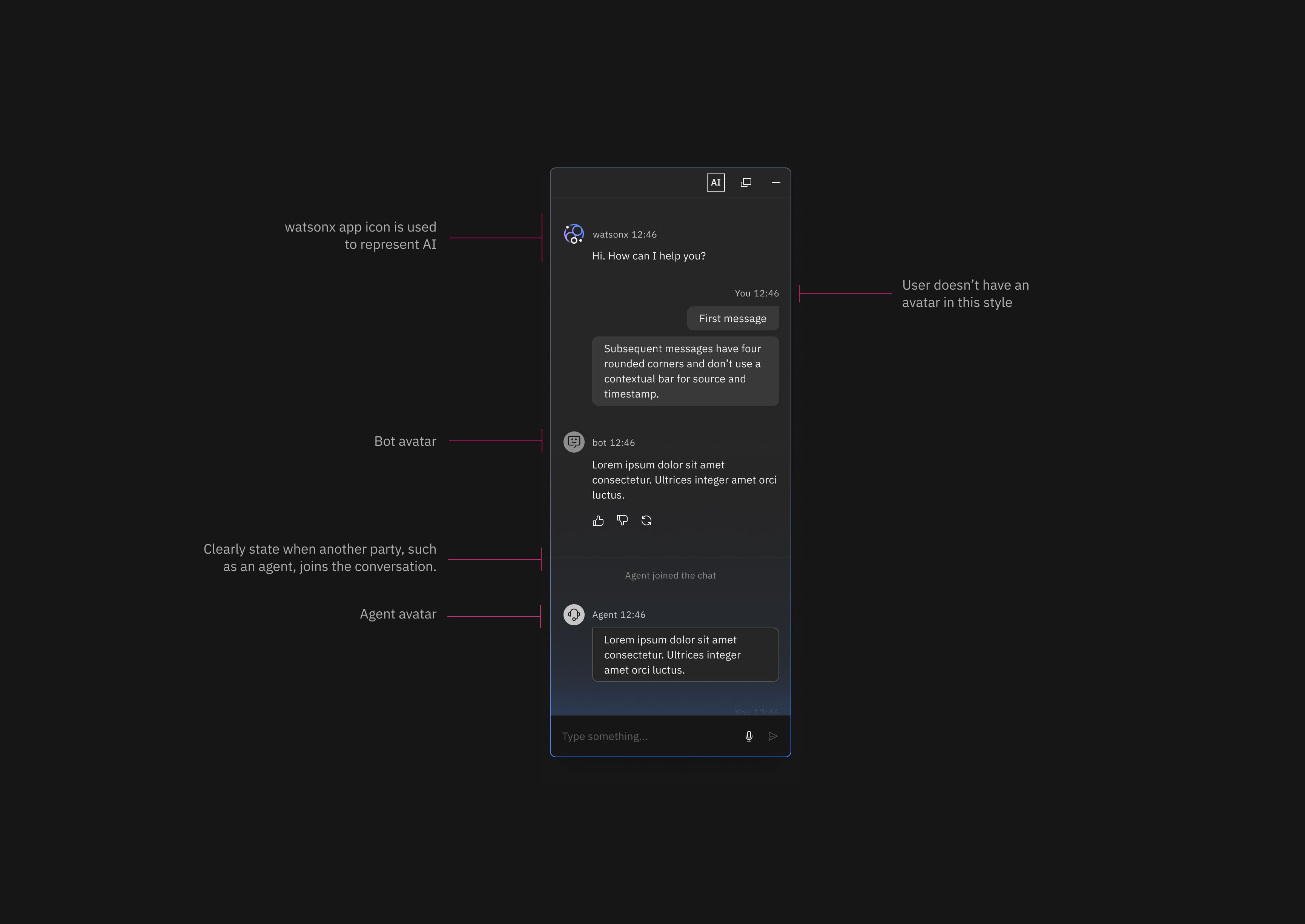

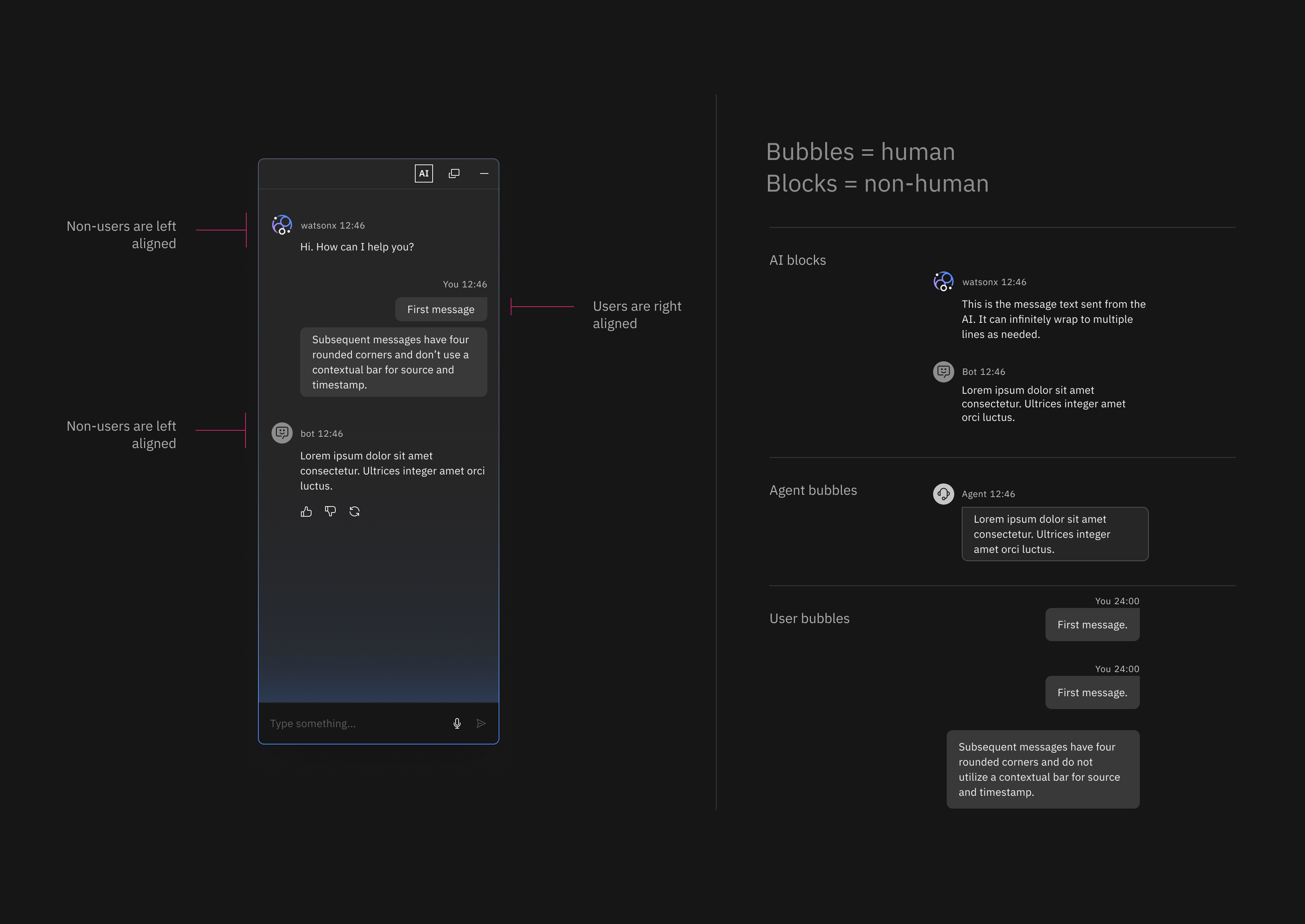

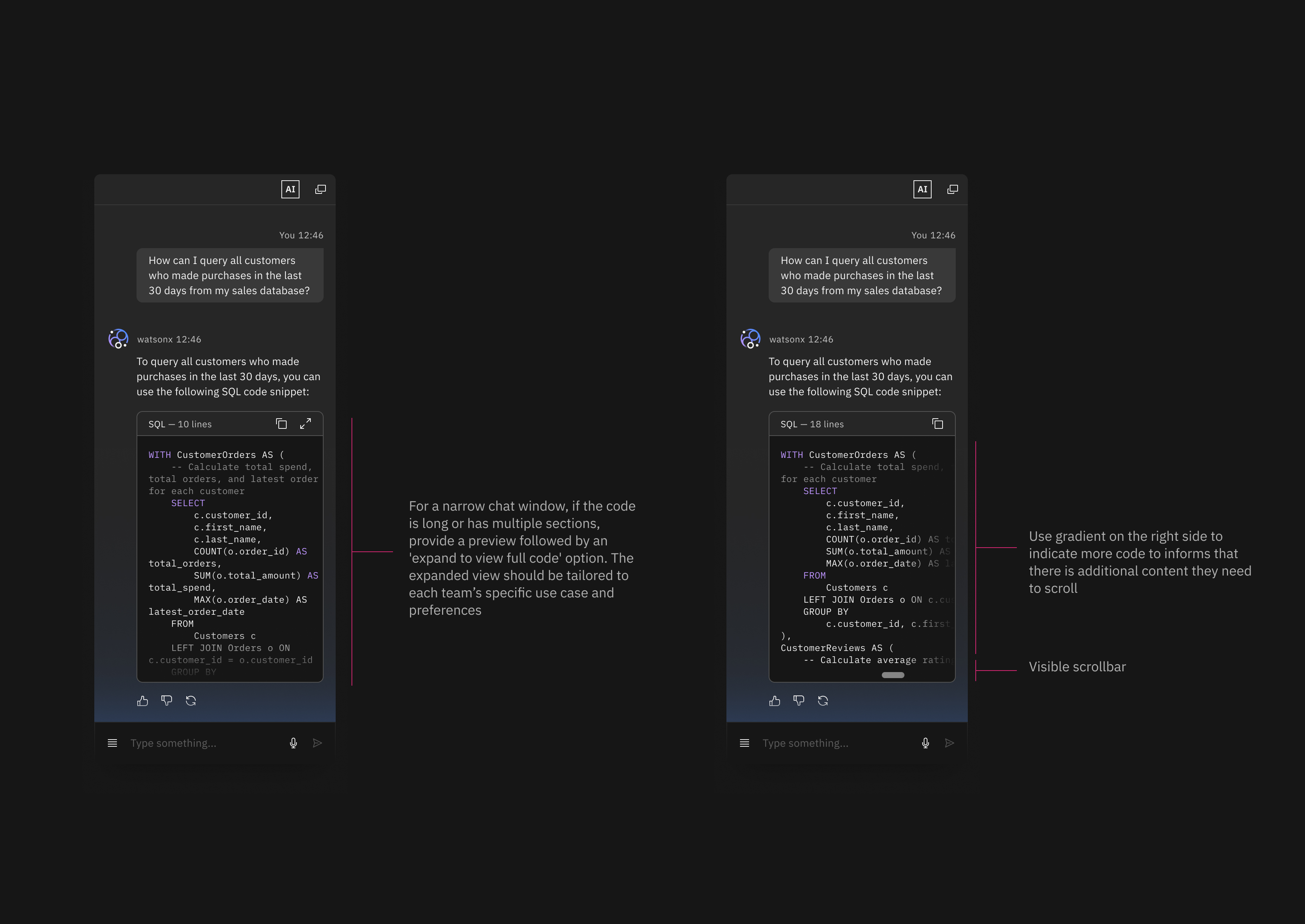

The chat panel provides a dedicated space for more complex interactions, such as generating CEL logic, asking for explanations, or fixing existing expressions. I translated the interaction concepts into high-fidelity UI aligned with IBM’s Carbon Design System, defining the detailed behavior and structure of the AI chat experience. The design clarifies different participants in the conversation by distinguishing AI (Watsonx), the user, and human agents through icons, message styles, and labels.

Inline prompt — Fast, lightweight actions

The inline prompt offers a quicker, more lightweight way to access assistance when a full chat interaction isn’t required. Inline prompts allow users to move quickly while still benefiting from AI support.

I designed the inline prompt as a lightweight entry point for AI assistance directly within the CEL authoring workflow. This interaction allows users to request quick transformations or adjustments without opening the full chat interface, supporting faster, focused actions. By embedding the prompt in context, the design minimizes disruption while enabling users to benefit from AI assistance for simple tasks, small edits, or repetitive operations.

Final Experience: AI Assistance for CEL Authoring

After exploring multiple interaction models and validating them with users, the final design brings AI assistance into the CEL authoring workflow through three complementary surfaces: a split-screen chat panel, an inline prompt, and a reusable code library.

How this resolves the original tension

This solution resolves the tension between speed and trust by treating AI as adaptive support rather than a single, authoritative feature. By distributing assistance across a chat panel, inline prompts, and a reusable code library, users can choose the level of help they need without losing visibility, control, or accountability. Logic is never hidden, understanding is always accessible, and correction is an expected part of the workflow. As a result, AI accelerates CEL creation while reinforcing confidence in what gets deployed — supporting faster outcomes without compromising the integrity required in identity and security systems.